
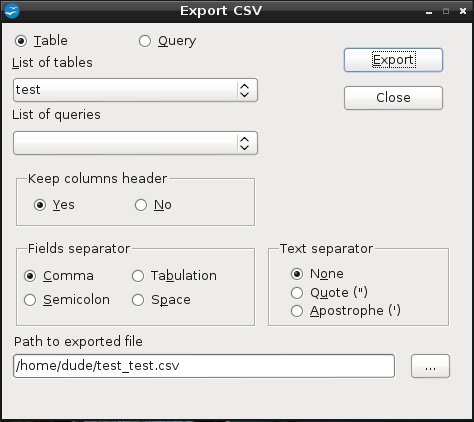

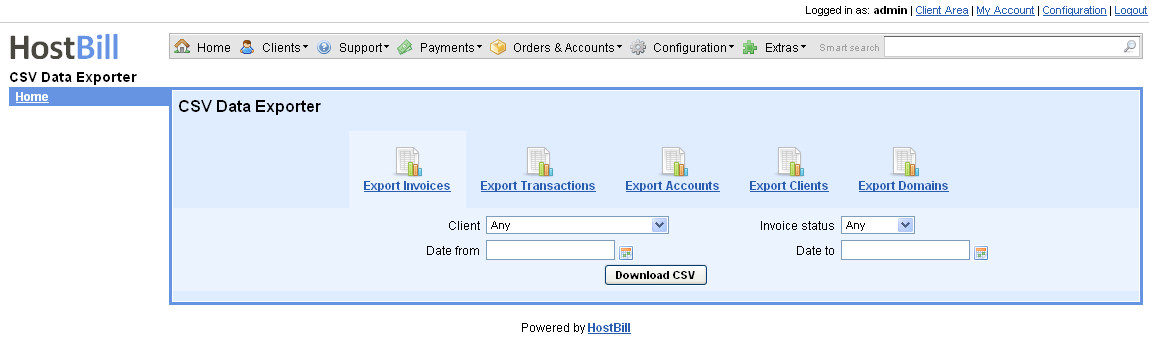

My query is below: `import elasticsearch`, `import unicodedata`, `import csv`, `es = elasticsearch.Elasticsearch('9200')`. This returns up to 100 rows. I would like to export the Elasticsearch data returned by my query to a CSV file. I have been looking everywhere for a way to do this, but all my attempts have failed.

One option is a command-line utility, written in Python, for querying Elasticsearch in Lucene query syntax or Query DSL syntax and exporting the resulting documents to a CSV file. A related question covers optimising the export of millions of Elasticsearch query results to CSV with pandas.

Why export data? To store the data outside Elasticsearch and/or to manipulate the data with a tool other than Kibana. If you want to manually download the results of a Kibana query as a CSV, that is possible: Share menu -> Share this search -> CSV Reports -> Generate CSV. It will take a while for the CSV to be generated. Once the CSV generation is completed, a message will appear with a link to the CSV.

Download and unzip the data: download the file eecs498.zip from Kaggle. Next, change the permissions on the file, since they are set to no permissions. Now, create the Logstash file csv.config, changing the path and server name to match your environment.

Data can also be moved from PostgreSQL into Elasticsearch by exporting the PostgreSQL tables to CSV files, creating an Elasticsearch index, and importing the data into the index. Step 1: Export data from PostgreSQL tables.
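For the query-to-CSV export described above, here is a minimal sketch, assuming the official `elasticsearch` Python client is installed. `helpers.scan` wraps the scroll API, so the export is not limited to the first page of results. The function names, the host, the index name and the field list are hypothetical placeholders, not taken from the original question.

```python
import csv


def write_hits_to_csv(hits, path, fieldnames):
    """Write the `_source` of each hit to a CSV file and return the row count."""
    count = 0
    with open(path, "w", newline="", encoding="utf-8") as f:
        # extrasaction="ignore" drops fields that are not listed in fieldnames;
        # restval="" fills in fields that a document does not have.
        writer = csv.DictWriter(
            f, fieldnames=fieldnames, extrasaction="ignore", restval=""
        )
        writer.writeheader()
        for hit in hits:
            writer.writerow(hit.get("_source", {}))
            count += 1
    return count


def export_index(host, index, query, path, fieldnames):
    """Stream every matching document from Elasticsearch into a CSV file."""
    # Imported here so the CSV helper above works without the client installed.
    from elasticsearch import Elasticsearch, helpers

    es = Elasticsearch(host)
    # helpers.scan yields hits one at a time using the scroll API.
    hits = helpers.scan(es, index=index, query=query)
    return write_hits_to_csv(hits, path, fieldnames)
```

A call might look like `export_index("http://localhost:9200", "my-index", {"query": {"match_all": {}}}, "out.csv", ["title", "year"])`, where every argument is an example value. Because rows are written as they arrive, memory use stays flat even for large result sets.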

What's a CSV file? CSV stands for comma-separated values: a standard text file that is easily imported into any spreadsheet software. A CSV file of the selected data will be available to save/download from Kibana.

Code: `from elasticsearch import Elasticsearch`, `import csv`. From the output you have given, I am assuming that you want to write data from `_source` to a CSV file.

There are no heading fields, so we will add them.
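Adding heading fields to a CSV that has none can be sketched with Python's standard `csv` module. The function name and the column names in the usage note are hypothetical; this is one possible approach, not necessarily the one the original tutorial used.

```python
import csv


def add_header(src_path, dst_path, header):
    """Copy a headerless CSV to dst_path with `header` as the first row."""
    with open(src_path, newline="", encoding="utf-8") as src, \
         open(dst_path, "w", newline="", encoding="utf-8") as dst:
        writer = csv.writer(dst)
        writer.writerow(header)
        # Copy the data rows through unchanged, one at a time.
        for row in csv.reader(src):
            writer.writerow(row)
```

For example, `add_header("data.csv", "data_with_header.csv", ["id", "name"])` writes a new file and leaves the original untouched.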

Here we show how to load CSV data into ElasticSearch using Logstash.

I'm trying to export a large set of Elasticsearch query results to CSV with pandas. I'm talking about a minimum of 1 million records that need to be exported.

Export data from ElasticSearch to CSV by raw or Lucene query: this tool works with ElasticSearch 6+ (OpenSearch works too) and makes use of ElasticSearch's Scroll API and Go's concurrency possibilities to work as fast as possible.

`Obj = s3.get_object(Bucket=BUCKET_NAME, Key=SOURCE_PATH)` And this is where I'm stuck. I don't know if I should use the bulk API and if I can just insert a JSON version of my .csv.
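On the bulk API question, one common pattern is to turn each CSV row into an action dict and hand the resulting generator to `helpers.bulk` from the `elasticsearch` Python client. The sketch below assumes the CSV has a header row and has already been read into a string, for instance with `Obj["Body"].read().decode("utf-8")` if the file is UTF-8 encoded. The function names and the index name are hypothetical.

```python
import csv
import io


def csv_to_actions(csv_text, index):
    """Yield one bulk-index action per CSV row; the first row is the header."""
    for row in csv.DictReader(io.StringIO(csv_text)):
        # Each row becomes the document body; Elasticsearch assigns the _id.
        yield {"_index": index, "_source": row}


def index_csv(es, csv_text, index):
    """Send the rows to Elasticsearch in batches via the bulk helper."""
    # Imported here so csv_to_actions works without the client installed.
    from elasticsearch import helpers

    return helpers.bulk(es, csv_to_actions(csv_text, index))
```

Note that every value read this way is a string, so numeric or date fields may need converting before indexing, or an explicit mapping on the index.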

Loading .csv files directly from an S3 bucket into Elasticsearch: each time a .csv is dropped into the S3 bucket, it will trigger my Lambda, which will feed the data from the .csv to Elasticsearch. Here's what I've got so far:

    import json
    import boto3
    from aws_requests_auth.aws_auth import AWSRequestsAuth
    from elasticsearch import RequestsHttpConnection, helpers, Elasticsearch
    from core_libs.dynamodbtypescasters import DynamodbTypesCaster
    from core_libs.streams import EsStream, EsClient

    credentials = boto3.Session().get_credentials()
    ES_SERVER = f"..."
    AWS_ACCESS_KEY = credentials.access_key
    AWS_SECRET_ACCESS_KEY = credentials.secret_key
    # ...
        aws_secret_access_key=AWS_SECRET_ACCESS_KEY,
    # ...
    BUCKET_NAME = ...

The format of the response body is newline-delimited JSON. Each exported object is exported as a valid JSON record, and records are separated by the newline character `\n`. When `excludeExportDetails=false` (the default), an export result details record is appended at the end of the file, after all the saved object records.
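Reading such a newline-delimited JSON export can be sketched as follows. The function name is hypothetical, and the check for an `exportedCount` key is an assumption about how to recognise the details record; verify it against the export produced by your Kibana version.

```python
import json


def split_export(ndjson_text):
    """Return (saved_objects, export_details) parsed from an NDJSON export."""
    records = [
        json.loads(line) for line in ndjson_text.split("\n") if line.strip()
    ]
    details = None
    # Assumption: the details record, when present, is the last record and is
    # the only one that carries an "exportedCount" key.
    if records and "exportedCount" in records[-1]:
        details = records.pop()
    return records, details
```

If the export was made with `excludeExportDetails=true`, the second return value is simply `None` and every record is treated as a saved object.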
